Some of my reading notes for the paper "An operating system for multicore and clouds: mechanisms and implementation"

Things I liked and that were interesting

It was interesting to learn that in future manycore systems the number of cores will exceed the number of processes. In connection with this the authors say that it is necessary to do space multiplexing instead of time multiplexing. I think this is a very big shift in thinking about computing power, as one has to think about how to map cores to processes, instead of time slicing one CPU to achieve multi-tasking.

It was also interesting that the authors presented VMs as a limiting factor. They argue that VMs add a layer of indirection that makes it more difficult to have a global picture of all the resources.

Limitations and Problems I had

I found it strange that the authors tried to come up with a solution for both multicore and cloud computing. I prefer the approach of Barrelfish, whose researchers focused only on multicore and were thus, in my opinion, able to come up with a more thorough solution.

I also felt that the topic of security and isolation was left out of the paper. The authors talk about the disadvantages of VMs and state that their system provides a uniform view of all global system resources. However, one of the main benefits of VMs is that they provide protection, and it would have been helpful if the authors had discussed how protection is ensured when there is only a single-system-image OS. For example, if fos is compromised, are there mechanisms for damage control, or is the attacker inevitably able to access all resources of the whole cloud?

Saturday, April 27, 2013

Thursday, April 25, 2013

Reading Notes: multikernel

Some of my reading notes for the paper "The Multikernel: a new OS architecture for scalable multicore systems".

Things I liked and that were interesting

I found it interesting that the authors decided to incorporate ideas from distributed systems and networking into their OS. By regarding each CPU core as an independent unit and only using message passing, they said that they could exploit insights and algorithms from distributed systems.

I also found it interesting that they chose to make the OS structure hardware-neutral. At first this statement didn't make much sense to me, because one would think the OS is the most hardware-dependent layer. Then they explained that by this they meant the design decision to separate the OS structure as much as possible from the hardware-specific parts (messaging transport system and device drivers). I think if one really succeeded in making it as hardware-neutral as possible, this would significantly facilitate the OS development process.

Wednesday, April 24, 2013

A Hello-World program in Unix V6 on the SIMH simulator

In my previous post I've talked about how to get Unix Version 6 to run in the SIMH PDP-11 simulator. Now that you have Unix V6 up and running, there is some nice hacking you can do. Here's the classic "Hello World" program in retro '70s style.

System setup

At this point you should have successfully started up and logged in as root into your Unix V6 system on the SIMH PDP-11 simulator. For instructions on how to do that, read here. Once everything is correct, you should be greeted by the root prompt "#".

These were the times of ed

In Unix V6 we're actually in a pre-vi and pre-emacs world. The standard editor available at that time was ed (a so-called line editor), which you will find even less intuitive to use than vim or emacs.

So here's how to write a Hello-World C program with ed and compile with cc:

Reading Notes: x86 Virtualization

Virtualization has gained a lot of attention in recent years. Here are some of my reading notes on the paper "A comparison of software and hardware techniques for x86 virtualization".

Things I liked and that were interesting

It was interesting to see that, according to this paper's results, software beats hardware. Usually the perception is that hardware is faster, but in the case of virtualization the software approach makes it possible to devise workarounds for the few difficult cases while staying efficient in most normal cases. In the hardware approach, however, the hardware has to be designed to handle all worst-case scenarios (for example throwing traps for all privileged state accesses) and is thus difficult to implement.

It was also interesting to see how virtualization was made possible on the x86, which was not originally designed for virtualization. The technical details on binary translation show that it must have been a very difficult engineering process for VMware to make virtualization possible on the popular x86 platform.

Limitations and Problems I had

While the paper shows that software virtualization is still superior right now, once hardware manufacturers improve their virtualization support, binary translation might be in danger of becoming superfluous. When hardware natively implements the complicated mechanisms for mapping virtual resources (memory, devices, etc.) to physical ones and handles them with sufficient efficiency and flexibility, there is no need to devise complicated software workarounds. In fact, Intel and AMD added support for MMU virtualization in their new generation of CPUs with virtualization support, and from a recent evaluation paper by VMware one can see that the second-generation CPUs already perform significantly better than the first generation.

Tuesday, April 23, 2013

Running Unix V6 in the SIMH PDP-11 simulator

!!! Attention: this article (and in fact my whole blog) has moved to:

http://thecsbox.com/2013/05/09/running-unix-v6-in-the-simh-pdp-11-simulator/ !!!

Just to live out my inner geek, I was recently experimenting with getting antique Unix versions to run in a simulator (admittedly this was also for a class project). I was using the simulators from the SIMH project and got the famous Unix Version 6 running on a PDP-11 simulator.

Related Articles:

Unix in a Nutshell

Prepping for Coding Interviews - Part I

Reading Notes: Ethernet paper by Metcalfe and Boggs

Monday, April 22, 2013

Reading Notes: Data Center TCP (DCTCP)

!!! Attention: this article (and in fact my whole blog) has moved to:

http://thecsbox.com/2013/05/01/data-center-tcp-dctcp/ !!!

Another interesting paper I read proposes a new version of TCP specifically targeted at data centers' needs, called Data Center TCP (DCTCP). Below are just some of my reading notes.

Things I liked and that were interesting

What I liked about the implementation was that it required only very few code changes and only one parameter to adjust. This makes it more convenient for data centers to try out DCTCP. I also found that the authors were very detailed in analyzing the performance of DCTCP, as they designed and ran many experiments to show the superiority of DCTCP. I also liked that the authors were very clear in stating under which conditions DCTCP offers an advantage and where it doesn't, for example by saying that they “make no claims that DCTCP is fair to TCP”.

Limitations and Problems I had

I found it very hard to discover limitations in this paper, since I feel the authors put a great deal of thought into it and it was published at SIGCOMM. One issue the authors mention is the problem of synchronization between flows that is caused by the “on-off” style marking of the packets. Another potential issue could be the isolation between TCP and DCTCP. The authors say that in data centers TCP and DCTCP flows can easily be separated, since load balancers and application proxies separate internal and external traffic. It is possible that problems arise in an actual data center environment when TCP and DCTCP packets cannot be properly distinguished (should one set a specific flag?) or need to be converted to one another.
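
To make the sender side a bit more concrete, here is a minimal Python sketch of how I understand DCTCP's reaction to marked packets: the sender keeps a running estimate of the fraction of marked packets and shrinks its window in proportion to it, instead of always halving. The function names, the variable names and the default weight of 1/16 are my own choices for illustration and are not taken from the paper's code.

```python
def update_alpha(alpha: float, marked_fraction: float, g: float = 1 / 16) -> float:
    """Exponentially weighted estimate of the fraction of marked packets.

    marked_fraction is the share of packets in the last window that carried
    a congestion mark; g is the weight given to the newest sample (assumed value).
    """
    return (1 - g) * alpha + g * marked_fraction


def reduce_window(cwnd: float, alpha: float) -> float:
    """Shrink the congestion window in proportion to the estimated congestion.

    alpha = 1 (every packet marked) gives the classic TCP halving;
    a small alpha gives only a gentle reduction.
    """
    return cwnd * (1 - alpha / 2)
```

With a fresh estimate of 0 and one fully marked window, `update_alpha(0.0, 1.0)` gives 0.0625, so the window only shrinks by about 3% rather than by half.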

UDP header explained

A UDP header is used when sending data via the User Datagram Protocol (UDP) in the Transport Layer, as opposed to sending via TCP (Transmission Control Protocol). UDP is connectionless, doesn't guarantee reliable data transfer and has a smaller overhead than TCP. The UDP header is only 8 bytes long (as compared to 20 bytes for a TCP header). Below is the header structure:

- Source Port: specifies the sender's port where replies should be sent to. Set to zero if not used.

- Destination Port: port number of the receiver. While the source port is optional, this one is required.

- Length: length of the entire UDP datagram in bytes, namely 8 bytes for the header + the size of the payload. Outer headers, such as the IP header, are not counted towards it.

- Checksum: the checksum is calculated over a pseudo-header (built from fields of the IP header), the UDP header and the data, with the checksum field itself set to zero during the calculation. In IPv4 the checksum is optional: if none is generated, the field is set to all zeros. It is nevertheless recommended to use a checksum, and IPv6 in fact requires it.
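
The four fields above can be sketched in a few lines of Python. This is only an illustration for IPv4, under the assumption that the addresses are passed in as 4-byte strings; the names `UDP_HEADER`, `internet_checksum` and `build_udp_datagram` are made up for this example.

```python
import struct

# Four unsigned 16-bit fields in network byte order:
# source port, destination port, length, checksum (8 bytes in total).
UDP_HEADER = struct.Struct("!HHHH")


def internet_checksum(data: bytes) -> int:
    """16-bit one's-complement sum of the data, complemented."""
    if len(data) % 2:
        data += b"\x00"  # pad to a whole number of 16-bit words
    total = sum(struct.unpack(f"!{len(data) // 2}H", data))
    while total >> 16:  # fold the carries back into the low 16 bits
        total = (total & 0xFFFF) + (total >> 16)
    return ~total & 0xFFFF


def build_udp_datagram(src_ip: bytes, dst_ip: bytes,
                       src_port: int, dst_port: int, payload: bytes) -> bytes:
    """Return the UDP header followed by the payload (IPv4 pseudo-header assumed)."""
    length = UDP_HEADER.size + len(payload)  # header + payload, no IP header
    # Pseudo-header: source IP, destination IP, zero byte, protocol 17 (UDP), UDP length.
    pseudo = src_ip + dst_ip + struct.pack("!BBH", 0, 17, length)
    header = UDP_HEADER.pack(src_port, dst_port, length, 0)  # checksum field zeroed
    checksum = internet_checksum(pseudo + header + payload)
    if checksum == 0:
        checksum = 0xFFFF  # all zeros is reserved for "no checksum"
    return UDP_HEADER.pack(src_port, dst_port, length, checksum) + payload
```

A receiver can verify the datagram by running the same sum over the pseudo-header plus the received datagram; with a correct checksum in place the result comes out as zero.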

Friday, April 19, 2013

Floating Points - an explanation for non computer scientists

!!! Attention: this article (and in fact my whole blog) has moved to:

http://thecsbox.com/2013/05/07/what-are-floating-point-numbers/ !!!

This article is not intended for very proficient programmers or computer scientists, but offers more of an introductory and high level explanation of floating point variables to the hobbyist programmer or high school student who likes to explore computers.

Let's look at Integers first

One of the first variable types you learnt was probably the integer variable. With integer variables we can represent integers (i.e. 0, 1, 2, ..., -1, -2, ...). The numbers are represented accurately on the computer, meaning the exact mathematical value can be stored in the computer. However, note that a computer cannot represent the complete infinite set of integers (..., -2, -1, 0, 1, 2, ...) but only a finite subset of it, because a computer's memory is always finite. In C, the two limit values are stored in the constants INT_MIN and INT_MAX.

Getting Real

The limitations of integers are apparent when doing simple divisions like 1 / 3. For code like "int x = 1 / 3" the fractional part is simply discarded and we'd get 0 for x. This is why computer architects and mathematicians in the early days of computing thought of ways to represent real numbers in the computer. The mathematical set they were interested in doing calculations with was the set of real numbers (containing rational numbers like 1/3 and irrational numbers like pi). The fundamental problem is that the real numbers are an infinite set, but the computer only has finite memory.
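
Here is a small sketch of that difference. I'm using Python rather than C so it is easy to try out; Python's `//` operator behaves like C's integer division for positive numbers, and the variable names are just for illustration.

```python
from fractions import Fraction

# Integer division throws the fractional part away,
# just like `int x = 1 / 3` in C.
x = 1 // 3

# Floating point division stores the closest value the machine
# can represent, which is close to 1/3 but not exactly 1/3.
approx = 1 / 3

# Fraction(approx) is the exact value that is really stored, so the
# difference to the true 1/3 shows the (tiny) representation error.
error = abs(Fraction(approx) - Fraction(1, 3))

print(x)                 # the integer result
print(approx)            # the floating point approximation
print(float(error))      # how far the stored value is from the real 1/3
```

The integer result loses the value completely, while the floating point result is only off by a very small amount. That small error is the price we pay for squeezing real numbers into finite memory.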

Infinity != Infinity ?

Note that with the set of integers, the problem of infinity was solved by specifying two limit values INT_MIN and INT_MAX, such that only the finite subset between those limits can be represented. Now, why can't we just carry this approach over to the real numbers? The problem is that the real numbers form a "larger" infinite set than the integers: even between two limits such as 0 and 1 there are still infinitely many real numbers. This was shown in Cantor's famous and lovely proof that the real numbers are an uncountable set whereas the integers (and even the rational numbers) are a countable set. Anyway, I'm digressing... (I just find these mathematical subtleties very lovely). If you haven't learnt about these concepts before, don't worry. The essential thing we're looking at here is how to represent real numbers in the best possible way on a computer.

Thursday, April 18, 2013

How to prepare for Programming Interviews - Part II

!!! Attention: this article (and in fact my whole blog) has moved to:

http://thecsbox.com/2013/05/03/how-to-prepare-for-programming-interviews-part-ii/ !!!

I wrote a more general article about how to prepare for coding interviews here. This article talks about how you should work through each individual programming question. I recommend working with the book "Cracking the Coding Interview".

Related Articles:

How to prepare for Programming Interviews - Part I

Should I study Computer Science?

Unix in a Nutshell

Wednesday, April 17, 2013

Reading Notes: Ethernet paper by Metcalfe and Boggs

The paper "Ethernet: Distributed Packet Switching for Local Computer Networks" by Metcalfe and Boggs is THE paper describing Ethernet technology. It appeared in the Communications of the ACM in 1976. Nowadays most wired LANs use Ethernet technology, so its importance is paramount. Below are some of my reading notes.

Synchronization on the Ethernet

In section 6 the authors mention slots as a time unit. In a contention interval the number of slots is calculated to determine how long the station will wait. Furthermore the authors explain that the slots are synchronized by the tail of the preceding acquisition interval. In section 4.4 the authors define a slot as the maximum time between starting a transmission and detecting a collision, which is one end-to-end round trip delay. Thus the synchronization via slots depends on the specific physical properties of the network, and one can regard slots as the basic time unit for synchronization.
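
As a rough illustration of slots as a time unit, the following Python sketch computes a slot from the cable length and picks a waiting time as a whole number of slots. The doubling rule with a cap of 10 is the truncated binary exponential backoff known from later standardized Ethernet, which I'm assuming here for illustration; the function names and parameters are made up and are not taken from the paper.

```python
import random


def slot_time(cable_length_m: float, propagation_speed_m_per_s: float) -> float:
    """One slot is one end-to-end round trip: to the far end of the cable and back."""
    return 2 * cable_length_m / propagation_speed_m_per_s


def backoff_delay(collisions: int, slot: float, rng: random.Random) -> float:
    """Wait a random whole number of slots; the range doubles with every collision."""
    max_slots = 2 ** min(collisions, 10) - 1  # cap of 10 is an assumption
    return rng.randint(0, max_slots) * slot
```

For a 1 km cable and a signal speed of 2e8 m/s, `slot_time(1000.0, 2.0e8)` comes out at about 10 microseconds, so the slot length really is a property of the physical network.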

Tuesday, April 16, 2013

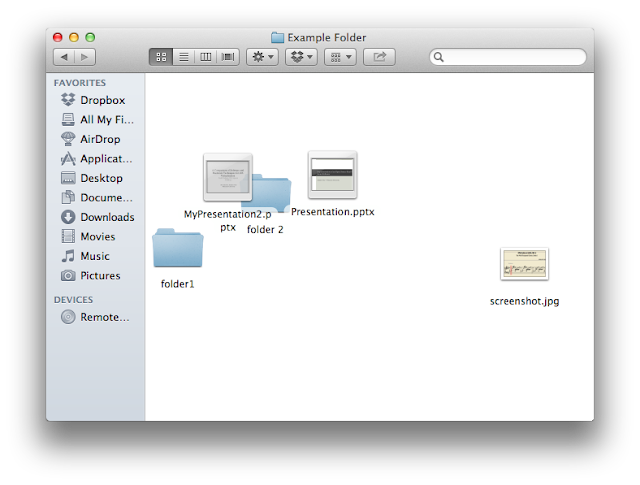

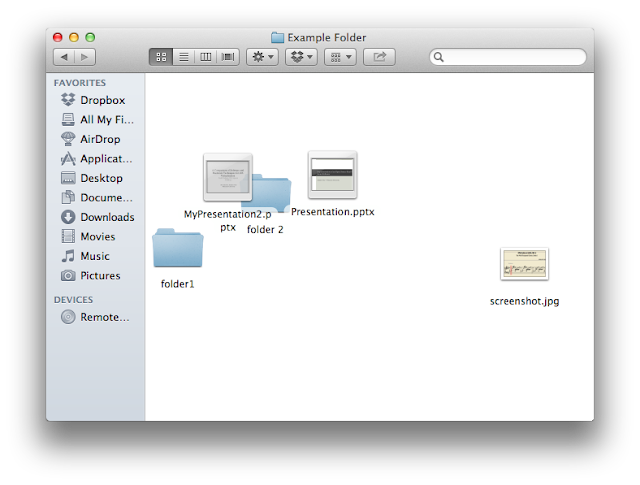

How to let items snap-to-grid by default in Finder?

!!! Attention: this article (and in fact my whole blog) has moved to:

http://thecsbox.com/2013/05/04/finder-items-snap-to-grid-by-default/ !!!

Are you also utterly annoyed that the items in the Finder don't appear in an ordered grid by default, but instead position themselves wherever they were dragged to in the Finder in Mac OS X? This is quite annoying, especially because when making the window smaller, the items don't rearrange themselves but instead hide themselves behind the current window size. Moreover, different items tend to overlap and in general it just makes for a very cluttered appearance.

The solution is to let items sort by name. While commonly also referred to as snap-to-grid, I have found that selecting that option only aligns the items in the grid, but not always in an ordered fashion.

Monday, April 15, 2013

Reading Notes: A Protocol for Packet Network Intercommunication

The paper "A Protocol for Packet Network Intercommunication" by Cerf and Kahn is THE paper about TCP/IP. I'd recommend it to everyone interested in computer networks and specifically TCP/IP. If you have access to the ACM digital library, you can read it here. Below are just some reading notes that can serve as a thinking point.

The paper’s Figures 3 and 4 show an internetwork packet format and a TCP address, respectively. Compare the current ideas of these with the paper’s. What has survived, been discarded, been enlarged or altered, been added?

In the paper the distinction between IP and TCP header is not that clear, or at least not in the way it is today. The internetwork header corresponds to the IP header while the segment format in Fig. 6 corresponds to the TCP header.

Things that are the same

- Source and destination address in the IP header.

- Source and destination port in the TCP header.

- Packet encapsulation with a local header outside the IP header.

- The notion of gateways, and that routing is performed based on the destination address. This essentially corresponds to lookups in a routing table to determine the next-hop IP.
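
The last point can be sketched as a tiny routing table lookup in Python. Note that this uses today's longest-prefix matching, which is not how the paper describes addresses; the table entries and the name `next_hop` are made up for illustration.

```python
import ipaddress

# Hypothetical routing table: (destination network, next-hop IP).
ROUTES = [
    (ipaddress.ip_network("10.0.0.0/8"), "192.0.2.1"),
    (ipaddress.ip_network("10.1.0.0/16"), "192.0.2.2"),
    (ipaddress.ip_network("0.0.0.0/0"), "192.0.2.254"),  # default route
]


def next_hop(destination: str) -> str:
    """Pick the next hop of the most specific route containing the destination."""
    addr = ipaddress.ip_address(destination)
    matches = [(net, hop) for net, hop in ROUTES if addr in net]
    # The default route matches everything, so matches is never empty here.
    _, hop = max(matches, key=lambda match: match[0].prefixlen)
    return hop
```

The gateway only looks at the destination address: `next_hop("10.1.2.3")` picks the more specific /16 route, while an address outside 10.0.0.0/8 falls through to the default route.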

Sunday, April 14, 2013

How to prepare for Programming Interviews - Part I

!!! Attention: this article (and in fact my whole blog) has moved to:

http://thecsbox.com/2013/05/03/how-to-prepare-for-programming-interviews-part-i/ !!!

Are you a computer science student and currently worried about how best to prepare for your upcoming programming interview? Then hopefully this article where I'm sharing some of my experiences will help you. I have spent extensive time preparing for programming interviews recently and interviewed with Google, Facebook and Microsoft. In the end I decided to go to Facebook for this summer *yay* ;-)

Related Articles:

How to prepare for Programming Interviews - Part II

Should I study Computer Science?

Saturday, April 13, 2013

Macbook can't connect to wireless internet

Several times I have had this strange issue with campus wireless access where my iMac or Macbook Air can't connect to the internet, but a Windows machine or my smartphone connects just fine. The solution that worked for me was going into the Library folder and deleting the SystemConfiguration folder.

Steps

- Go into your hard disk folder, which is usually Macintosh HD.

- Then in your hard disk, go into the Library folder and then into the Preferences folder.

- Delete the SystemConfiguration folder in there.

- Reboot

After rebooting it finally connects to the wireless internet, and the SystemConfiguration folder is also recreated, so you don't need to worry about that.

Wednesday, April 10, 2013

Should I study Computer Science?

!!! Attention: this article (and in fact my whole blog) has moved to:

http://thecsbox.com/2013/05/03/how-to-prepare-for-programming-interviews-part-ii/ !!!

This is an article that I’ve always wanted to write. I hope it can help high school students thinking about their college majors or undergraduates who still have to choose majors. About myself, I’m a CS grad student at Princeton and will be interning at Facebook. While I can’t speak much about working as a software engineer yet, I do have my fair share of experience with five years of computer science education so far and with being passionate about technology since middle school. Nevertheless, this article just reflects my own experiences and opinions.

First things first

If you just *know* that studying computer science is what you’re meant to do, then really, you don’t even need to read this article :-) I feel it’s like with entrepreneurship and starting a company, some people just have to do it, because for them, that’s what they are meant to do. On the other hand, if you’re not sure, then this article offers some discussion, hoping to be helpful in your decision process. But that’s really all it can do. No one can create that inherent desire for you.

These things are a plus

A love/passion for something in technology is very important. Maybe you have played around with Linux, or done some Web design, or you want to know how computer games work, or anything related to technology where you played around, explored it and really liked the experience. You don’t necessarily have to know how to program, but a desire to study technical things and some unexplainable attraction to and fascination with tech can go a long way. For me it was Linux. I just really loved playing around with different distros, tweaking the system, compiling my own kernel, etc. I wouldn’t have considered myself a programmer at that time, but I could already feel my affinity for technology and experienced the joy I had when playing around with things.

Sunday, April 7, 2013

Unix in a Nutshell

!!! Attention: this article (and in fact my whole blog) has moved to:

http://thecsbox.com/2013/05/04/unix-in-a-nutshell/ !!!

I recently read the original paper on Unix: “The Unix Time-Sharing System” (Ritchie, Thompson, 1974), and I’ve long been a Unix and Linux fan, so I thought I’d take this occasion to write an article about what I consider to be Unix’s main contributions and what makes it so interesting.

A little bit of history first...

Unix was written by Dennis Ritchie and Ken Thompson at the famous AT&T Bell Labs during the 1970s. The original hardware they used was a PDP-7 and the first version was written in assembly. This may sound incredible today, but at that time not many high-level languages existed and it was natural to write something as complex and performance-sensitive as an operating system in assembly. Unix is also credited with being the first widespread general-purpose operating system. While operating systems existed before Unix, they were usually produced by the hardware manufacturer and were thus very heterogeneous. The idea of having a separate OS provider and of having one OS support different hardware did not really exist before Unix.

Contributions of Unix

I consider the following points the most important contributions of Unix:

- file system and file I/O

- greatly assisted the widespread use of C

- focus on simplicity and elegance in its design

Saturday, April 6, 2013

What's the difference between TCP and UDP?

!!! Attention: this article (and in fact my whole blog) has moved to:

http://thecsbox.com/2013/05/02/difference-between-tcp-and-udp/ !!!

This article is intended for computer science students who are currently learning about network protocols, developers seeking to refresh their networking knowledge or simply for people interested in computer networks.

Related Articles:

Reading Notes: A Protocol for Packet Network Intercommunication

Reading Notes: Ethernet paper by Metcalfe and Boggs

Unix in a Nutshell
